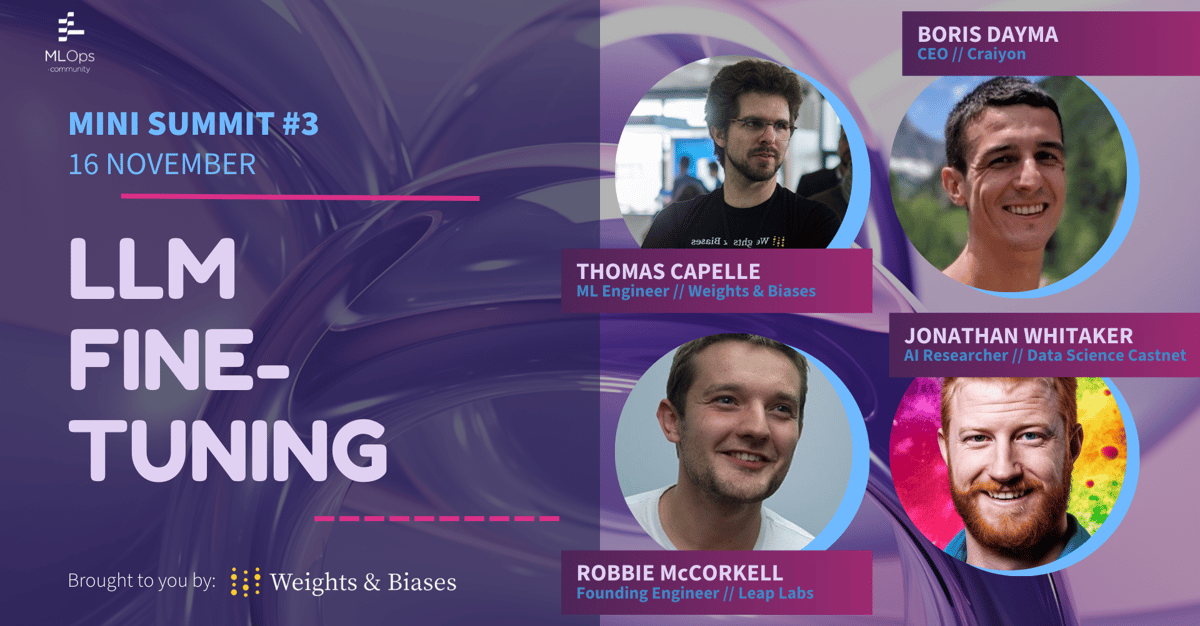

MLOps Community: LLMs Mini Summit

Unlock the secrets of getting the most out of language models in our exclusive sessions covering the many facets of language model fine-tuning. Dive into the intricacies of the fine-tuning process, explore best practices for training large models, and see real-world applications through the lens of a Kaggle competition.

Our sessions offer an in-depth look at new evaluation paradigms, shedding light on what these models actually learn. Get answers to fundamental questions about fine-tuning and its challenges as seasoned experts share practical experience, useful tips, and unique insights.

Don't miss this opportunity, brought to you by Weights & Biases, to expand your knowledge and harness the full potential of language models! Join us to gain a deeper understanding of these models and learn to use them effectively. Register now and stay at the forefront of advances in language model fine-tuning!

Speakers

Agenda